
Azure data studio import csv

4/28/2023

Specify the connection details to the Azure SQL Database that you created earlier. You’ll need to provide authentication details too. I’m using the same “admin” account for simplicity here, although in a real environment it may be preferable to use a separate account.

Clicking the “Test connection” button should result in an error message at first. The IP address given in the error message will need to be added to the approved list of the server that contains the database you’re connecting to. If the IP address is dynamic, you may want to use the whole IP range… Try the connection again, and hopefully it succeeds now.

Back in the Data Factory pipeline, hit “Debug”. You’ll probably get another error at first, and have to add another IP address to the approved list for the database server. After pressing “Debug” again, you should hopefully get a successful run of the copy activity.

Back in the Query Editor for the database, a SELECT statement should show that the data from the csv held on Azure Blob Storage has now been imported into the Azure SQL Database table, using Data Factory.
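
Server-level firewall rules in Azure SQL take a start and an end IPv4 address. A minimal sketch, assuming Python 3.9+ and its standard `ipaddress` module, of turning the IP address from the error message into such a pair: a /32 prefix gives a single-address rule, and a wider prefix covers a dynamic address. The /24 default and the example address (from the 203.0.113.0/24 documentation range) are assumptions for illustration, not values Azure suggests.

```python
import ipaddress


def firewall_range(client_ip: str, prefix_len: int = 24) -> tuple[str, str]:
    """Return (start, end) IPv4 addresses for a server-level firewall rule.

    prefix_len=32 yields a single-address rule (start == end). Smaller
    values widen the rule to the whole network containing client_ip;
    the /24 default is an illustrative assumption, not an Azure setting.
    """
    ip = ipaddress.IPv4Address(client_ip)  # ValueError if not a valid IPv4 address
    # strict=False lets us pass a host address and get its enclosing network.
    network = ipaddress.IPv4Network(f"{ip}/{prefix_len}", strict=False)
    return str(network.network_address), str(network.broadcast_address)


if __name__ == "__main__":
    # 203.0.113.57 is a documentation-range placeholder, not a real client.
    print(firewall_range("203.0.113.57"))      # ('203.0.113.0', '203.0.113.255')
    print(firewall_range("203.0.113.57", 32))  # ('203.0.113.57', '203.0.113.57')
```

Enter the two returned values as the start and end addresses of the rule. Keep the range as narrow as your connection allows, since every address inside it is let through the firewall.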

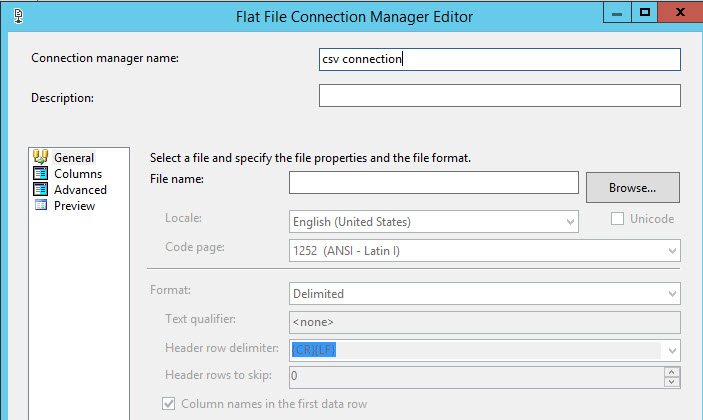
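
For the final SELECT check, it helps to know how many rows to expect. A minimal sketch that counts the data rows of the csv with Python’s standard `csv` module, assuming a single header row; its result can be compared with a `SELECT COUNT(*)` on the destination table. The sample data is made up.

```python
import csv
import io


def count_data_rows(csv_text: str, has_header: bool = True) -> int:
    """Count csv records, excluding a single header row if there is one.

    csv.reader treats a quoted field such as "Smith, Jo" as one value (and
    allows quoted newlines), so this counts records, not physical lines.
    Blank lines are ignored.
    """
    records = [row for row in csv.reader(io.StringIO(csv_text)) if row]
    if has_header and records:
        return len(records) - 1
    return len(records)


def count_file_rows(path: str, has_header: bool = True) -> int:
    """Same count for a local copy of the csv file."""
    # utf-8-sig strips a byte-order mark if the file has one.
    with open(path, newline="", encoding="utf-8-sig") as f:
        return count_data_rows(f.read(), has_header)


if __name__ == "__main__":
    sample = 'id,name,amount\n1,"Smith, Jo",10.5\n2,Lee,7\n'  # made-up data
    print(count_data_rows(sample))  # 2
```

If the two counts differ, a first thing to check is whether the header row was treated as data on one side but not the other.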

Go to “Source” for the “Copy data” activity, and click “+ New”. On the “New dataset” menu that appears, select “Azure Blob Storage”, hit “Continue”, and then choose “DelimitedText” for the file format. Give the dataset a name (“LFData_Import”), and create a new linked service. Point the linked service to the Storage Account that you created previously.

Once the linked service has been created, select “Open” next to the source dataset. Specify the exact file within the store that you want to import data from. You have the option to “preview data”, to check things look OK.

Following a very similar method to create a destination, select the “Sink” tab of the “Copy data” activity, then “+ New”, and choose “Azure SQL Database” from the options that appear.
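
Data Factory stores each dataset as a JSON definition. As a rough sketch, the source dataset described above (a DelimitedText dataset over a file in Azure Blob Storage) looks something like the dictionary built below. Only `LFData_Import` comes from the walkthrough; the linked service name, container, and file name are hypothetical placeholders, and only a few of the available format properties are shown.

```python
import json


def delimited_text_dataset(name: str, linked_service: str,
                           container: str, file_name: str) -> dict:
    """Build a minimal DelimitedText dataset definition for a csv in Blob Storage."""
    return {
        "name": name,
        "properties": {
            "type": "DelimitedText",
            # Points the dataset at the Blob Storage linked service.
            "linkedServiceName": {
                "referenceName": linked_service,
                "type": "LinkedServiceReference",
            },
            "typeProperties": {
                # The exact file to import: container plus file name.
                "location": {
                    "type": "AzureBlobStorageLocation",
                    "container": container,
                    "fileName": file_name,
                },
                "columnDelimiter": ",",
                "firstRowAsHeader": True,
            },
        },
    }


if __name__ == "__main__":
    # Hypothetical names, apart from LFData_Import.
    ds = delimited_text_dataset("LFData_Import", "AzureBlobStorage1", "imports", "lfdata.csv")
    print(json.dumps(ds, indent=2))
```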

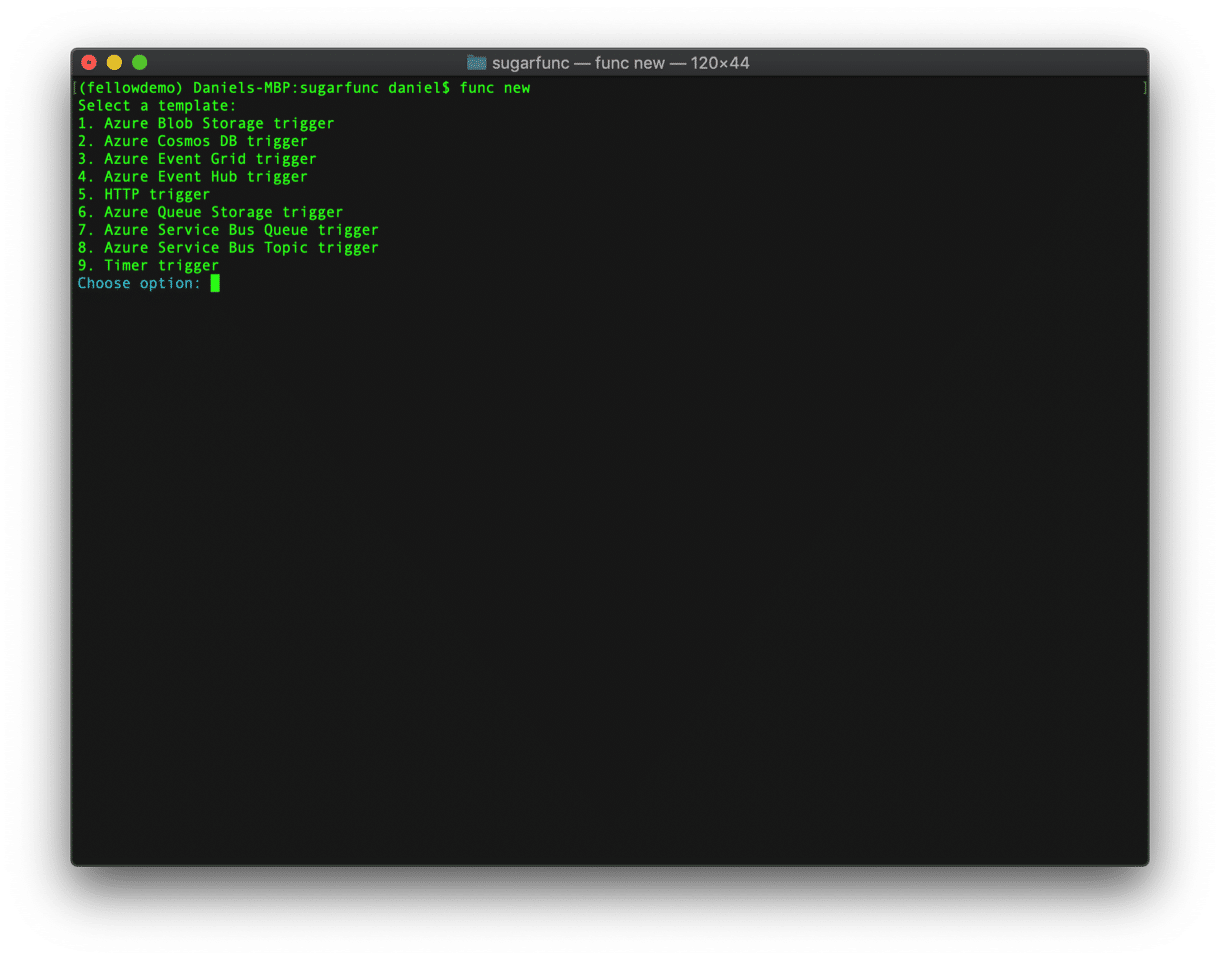

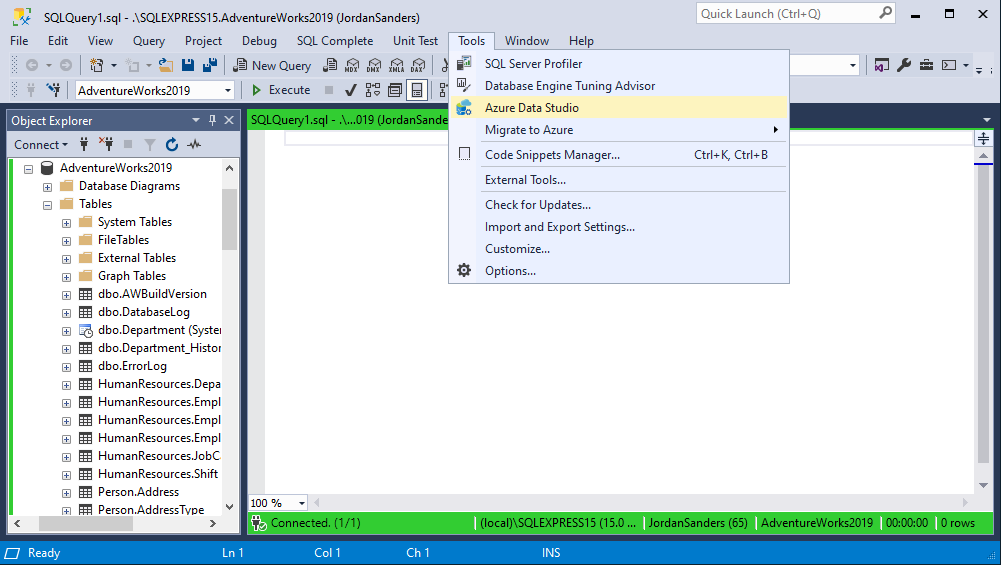
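
The finished “Copy data” activity ties the source and sink datasets together. A minimal sketch of its JSON shape, assuming a DelimitedText source read from Blob Storage and an Azure SQL Database sink; the pipeline name, activity name, and sink dataset name are hypothetical, and definitions generated by the Studio carry more settings than shown here.

```python
import json


def copy_activity(name: str, source_dataset: str, sink_dataset: str) -> dict:
    """Build a minimal Copy activity: delimited text in, Azure SQL Database out."""
    return {
        "name": name,
        "type": "Copy",
        "inputs": [{"referenceName": source_dataset, "type": "DatasetReference"}],
        "outputs": [{"referenceName": sink_dataset, "type": "DatasetReference"}],
        "typeProperties": {
            "source": {
                "type": "DelimitedTextSource",
                "storeSettings": {"type": "AzureBlobStorageReadSettings"},
                "formatSettings": {"type": "DelimitedTextReadSettings"},
            },
            "sink": {"type": "AzureSqlSink"},
        },
    }


def pipeline(name: str, activities: list[dict]) -> dict:
    """Wrap activities in a pipeline definition."""
    return {"name": name, "properties": {"activities": activities}}


if __name__ == "__main__":
    p = pipeline("ImportLFData", [copy_activity("Copy data1", "LFData_Import", "AzureSqlTable1")])
    print(json.dumps(p, indent=2))
```

Building the definition in code is mainly useful for reading or comparing what the Studio generates; the walkthrough itself does everything through the UI.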

Log in using the SQL account and password you’ve just created. Write a simple query to create a basic table (which will need to match the schema of the data you’re importing), and click “Run”. The table should now be visible in the object explorer on the left.

Create the Azure Data Factory Pipeline

Dive into the new Resource Group and click “Create a resource”. From the “Integration” menu, choose “Data Factory”, and create a Data Factory instance inside the Resource Group.

Once the new resource has been created, click on it, and select “Open Azure Data Factory Studio” from the “Get started” section. From the “Move & transform” menu, drag “Copy data” over to the pipeline canvas.
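
Since the table has to match the schema of the csv, one quick way to draft the CREATE TABLE statement is from the file’s header row. This hypothetical helper quotes each column name in square brackets and gives every column the same type, NVARCHAR(255), purely as a simplifying assumption; adjust the types to your data before running the statement in the Query editor. The table name `dbo.LFData` is also made up.

```python
import csv
import io


def quote_ident(name: str) -> str:
    """Quote a T-SQL identifier with square brackets, escaping any ']'."""
    return "[" + name.replace("]", "]]") + "]"


def create_table_sql(table: str, header_line: str, col_type: str = "NVARCHAR(255)") -> str:
    """Draft a CREATE TABLE statement whose columns mirror a csv header row."""
    # Parse the header with csv so quoted column names containing commas survive.
    columns = next(csv.reader(io.StringIO(header_line)), [])
    if not columns:
        raise ValueError("header row is empty")
    col_defs = ",\n".join(f"    {quote_ident(c.strip())} {col_type} NULL" for c in columns)
    return f"CREATE TABLE {table} (\n{col_defs}\n);"


if __name__ == "__main__":
    print(create_table_sql("dbo.LFData", "id,name,amount"))
    # CREATE TABLE dbo.LFData (
    #     [id] NVARCHAR(255) NULL,
    #     [name] NVARCHAR(255) NULL,
    #     [amount] NVARCHAR(255) NULL
    # );
```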

Under “Firewall rules”, add the current client IP address to the approved list.

Click “Review + Create”, and you’ll be taken to a “Deployment in progress” screen, which hopefully results in a successfully created new database. Within that database, select “Query editor” from the menu on the left.

Specify your Subscription, to be used for the database deployment, and select the Resource Group created earlier. Give the database a name (we use “DataMinister_DB”).

Choose a server to put the database on. If you don’t have a server set up already, you’ll need to create a new one: click “Create new” under the “Server” dropdown, and complete the form. You’ll need to specify a SQL or AD account to use for authentication.

Back in the “Create SQL Database” wizard, go to the “Networking” tab and allow connectivity through a public endpoint.
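
The server name chosen here becomes the host `<server>.database.windows.net`, and the SQL account created with it supplies the credentials for any client connecting to the database, Data Factory included. A minimal sketch that assembles an ADO.NET-style connection string of the kind the Azure portal shows for a database; it includes only a subset of keys, the server name, user name, and password are placeholders, and in practice the password belongs in a secret store rather than in code.

```python
def azure_sql_connection_string(server: str, database: str, user: str, password: str) -> str:
    """Assemble an ADO.NET-style connection string for Azure SQL Database.

    `server` is the short server name; the .database.windows.net suffix and
    port 1433 are appended here. Values containing ';' are rejected rather
    than quoted, to keep the sketch simple.
    """
    parts = {
        "Server": f"tcp:{server}.database.windows.net,1433",
        "Initial Catalog": database,
        "User ID": user,
        "Password": password,
        "Encrypt": "True",
        "TrustServerCertificate": "False",
        "Connection Timeout": "30",
    }
    for key, value in parts.items():
        if ";" in value:
            raise ValueError(f"{key} must not contain ';' in this sketch")
    # Dicts keep insertion order, so keys appear in the order listed above.
    return ";".join(f"{k}={v}" for k, v in parts.items()) + ";"


if __name__ == "__main__":
    # Hypothetical server and login; only DataMinister_DB comes from the walkthrough.
    print(azure_sql_connection_string("my-server", "DataMinister_DB", "sqladminuser", "<password>"))
```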
